The Kirkpatrick model


This guide introduces the Kirkpatrick Model and the benefits of using it in your training program. After reading it, you will be able to use the model to evaluate training in your organization effectively.


What is the Kirkpatrick model?

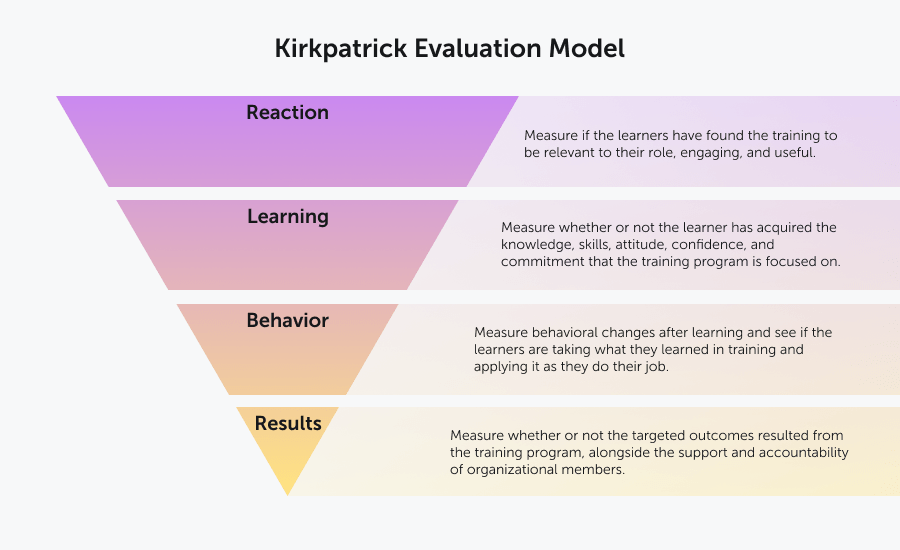

The Kirkpatrick model, also known as Kirkpatrick’s Four Levels of Training Evaluation, is a key tool for evaluating the efficacy of training within an organization. It is recognized worldwide as one of the most widely used frameworks for evaluating training.

The Kirkpatrick model consists of four levels: reaction, learning, behavior, and results.

It can be used to evaluate both formal and informal learning, with any style of training.

The Kirkpatrick Model has been widely used since Donald Kirkpatrick first published it in the 1950s, and it has been revised and updated three times since its introduction. The 2016 update, known as the New World Kirkpatrick Model, emphasizes making training relevant to people’s everyday jobs.

The Kirkpatrick model

Level 1: Reaction

The first level is learner-focused. It measures whether learners found the training relevant to their role, engaging, and useful.

There are three parts to this:

- Satisfaction: Is the learner happy with what they have learned during their training?

- Engagement: How much did the learner get involved in and contribute to the learning experience?

- Relevance: How much of this information will learners be able to apply on the job?

Reaction is generally measured with a survey completed after the training has been delivered. This survey, often called a ‘smile sheet’, asks learners to rate their experience of the training and offer feedback.

Some of the areas that the survey might focus on are:

- Program objectives

- Course materials

- Content relevance

- Facilitator knowledge
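If the rating part of the smile sheet uses a numeric scale, averaging the responses for each focus area shows where learners were least satisfied. The sketch below is a minimal illustration that assumes a hypothetical 1 to 5 rating per area; the data layout and the `summarize_ratings` name are illustrative, not part of the Kirkpatrick model.

```python
from statistics import mean

# Hypothetical smile-sheet responses: each dict maps a focus area to a 1-5 rating.
responses = [
    {"Program objectives": 4, "Course materials": 3, "Content relevance": 5, "Facilitator knowledge": 5},
    {"Program objectives": 5, "Course materials": 2, "Content relevance": 4, "Facilitator knowledge": 4},
    {"Program objectives": 4, "Course materials": 3, "Content relevance": 4},  # one area skipped
]

def summarize_ratings(responses):
    """Return the mean rating per focus area, ignoring areas a learner skipped."""
    by_area = {}
    for response in responses:
        for area, rating in response.items():
            by_area.setdefault(area, []).append(rating)
    return {area: round(mean(ratings), 2) for area, ratings in by_area.items()}

summary = summarize_ratings(responses)
# Sort ascending so the weakest-rated areas are listed first.
for area, score in sorted(summary.items(), key=lambda item: item[1]):
    print(f"{area}: {score}")
```

Here "Course materials" comes out lowest (2.67), which would point to the materials as the first thing to review. Written comments still matter: the numbers show where to look, and the comments explain why.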

Tips for implementing level 1: reaction

- Use an online questionnaire.

- Set aside time at the end of training for learners to fill out the survey.

- Provide space for written answers, rather than multiple choice.

- Pay attention to verbal responses given during training.

- Create questions that focus on the learner’s takeaways.

- Use information from previous surveys to inform the questions that you ask.

- Let learners know at the beginning of the session that they will be filling this out. This allows them to consider their answers throughout and give more detailed responses.

- Stress the need for honest answers: you want learners’ true opinions, not polite responses.

Level 2: Learning

This level focuses on whether the learner has acquired the knowledge, skills, attitude, confidence, and commitment that the training program targets.

These five aspects can be measured formally or informally.

For accurate results, use both pre- and post-training assessments.
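Comparing each learner's pre- and post-training scores shows how much was learned. The sketch below computes the raw gain and, as one common option, the normalized gain (the share of the possible improvement that was actually achieved). The 0 to 100 score scale, the learner names, and the `learning_gain` function are assumptions for illustration only.

```python
from statistics import mean

MAX_SCORE = 100  # assumed maximum score on the assessment

# Hypothetical (pre, post) assessment scores per learner.
scores = {
    "learner_a": (40, 70),
    "learner_b": (60, 80),
    "learner_c": (80, 90),
}

def learning_gain(pre, post, max_score=MAX_SCORE):
    """Return (raw gain, normalized gain) for one learner."""
    raw = post - pre
    # Normalized gain: fraction of the remaining headroom that was gained.
    # It is undefined when the pre-test score is already the maximum.
    normalized = raw / (max_score - pre) if pre < max_score else None
    return raw, normalized

gains = {name: learning_gain(pre, post) for name, (pre, post) in scores.items()}
avg_raw = mean(raw for raw, _ in gains.values())
avg_normalized = mean(n for _, n in gains.values() if n is not None)
print(f"Average raw gain: {avg_raw:.1f} points")
print(f"Average normalized gain: {avg_normalized:.2f}")
```

In this example the raw gains differ (30, 20, and 10 points), but each learner closed half of the gap to a perfect score, so the normalized gain is 0.5 for everyone. Looking at both numbers avoids penalizing learners who started with high scores.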

Tips for implementing level 2: learning

- Conduct assessments before and after training to get a more complete picture of how much was learned.

- Assessments can take a variety of formats, such as exams, interviews, questionnaires, and surveys.

- In some cases, a control group can be helpful for comparing results.

- Define a clear scoring process in advance to reduce inconsistencies.

- Make sure that the assessment strategies are in line with the goals of the program.

- Don’t forget to include thoughts, observations, and critiques from both instructors and learners; they often contain valuable insight.

Level 3: Behavior

This level is crucial for understanding the true impact of the training.

It measures behavioral change after learning and shows whether learners are applying what they learned in training to their jobs.

It also looks at required drivers, that is, “processes and systems that reinforce, encourage and reward the performance of critical behaviors on the job.”

The results of this assessment show not only whether the learner has correctly understood the training but also whether the training is applicable in that specific workplace.

This is because examining behavior in the workplace often uncovers other issues. If a person does not change their behavior after training, it does not necessarily mean that the training has failed.

It might simply mean that existing processes and conditions within the organization need to change before individuals can successfully bring in a new behavior.

Tips for implementing level 3: behavior

- The most effective time to implement this level is three to six months after the training is completed. Evaluations done too soon will not provide reliable data.

- Use a mix of observations and interviews to assess behavioral change.

- Minimize or avoid opinion-based observations so that they do not bias the results.

- To begin, use subtle observations to assess change. Once the change is noticeable, more obvious evaluation tools, such as interviews or surveys, can be used.

- Define the desired change clearly: exactly which skills should the learner put into use, and how is mastery of these skills demonstrated?

- Also consider the degree of change and how consistently the learner is applying the new skills. Will this be a lasting change?

- Evaluations are more successful when folded into existing management and training methods.
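One way to track how consistently new skills are applied is to record, at each observation, which target behaviors the learner demonstrated, and then compute how often each behavior was seen. The sketch below is a simplified illustration: the `Observation` record, the target behaviors, and the `consistency_by_behavior` function are hypothetical, and the three-to-six-month guidance is approximated as a 90 to 183 day window.

```python
from dataclasses import dataclass

@dataclass
class Observation:
    days_after_training: int   # when the observation took place
    behaviors_seen: set[str]   # target behaviors the observer recorded

# Hypothetical target behaviors, defined before training.
TARGET_BEHAVIORS = {"uses new checklist", "escalates issues early"}

# Approximate three-to-six-month window, in days (an assumption, not a fixed rule).
WINDOW = (90, 183)

def consistency_by_behavior(observations, targets=TARGET_BEHAVIORS, window=WINDOW):
    """Share of in-window observations in which each target behavior was seen."""
    start, end = window
    in_window = [o for o in observations if start <= o.days_after_training <= end]
    if not in_window:
        return {}
    return {
        behavior: sum(behavior in o.behaviors_seen for o in in_window) / len(in_window)
        for behavior in targets
    }

observations = [
    Observation(30, {"uses new checklist"}),  # too early: excluded from the window
    Observation(95, {"uses new checklist", "escalates issues early"}),
    Observation(130, {"uses new checklist"}),
    Observation(170, {"uses new checklist", "escalates issues early"}),
    Observation(175, {"uses new checklist"}),
]

rates = consistency_by_behavior(observations)
for behavior, rate in sorted(rates.items()):
    print(f"{behavior}: seen in {rate:.0%} of observations")
```

In this example the checklist is used at every in-window observation, while early escalation appears only half the time. That gap is a prompt to look at required drivers before concluding that the training failed.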

Level 4: Results

This level focuses on whether the targeted outcomes resulted from the training program, together with the support and accountability of organizational members.

These results will differ for each organization, and indeed each training program, but they can be tracked using key performance indicators (KPIs). Common examples include increased sales, fewer workers’ compensation claims, or a higher return on investment.
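Once a KPI is chosen, you can compute its change from a pre-training baseline. You can also derive a return-on-investment figure by comparing the monetary benefit attributed to the training with its cost. The sketch below uses the common ROI formula (net benefit divided by cost, as a percentage). The figures and function names are hypothetical. Deciding how much of a benefit to credit to the training is a judgment each organization has to make.

```python
def percent_change(baseline, current):
    """Percentage change of a KPI relative to its pre-training baseline."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100

def training_roi(benefit, cost):
    """ROI as a percentage: (benefit - cost) / cost * 100."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (benefit - cost) / cost * 100

# Hypothetical figures for one training program.
monthly_sales_before = 200_000
monthly_sales_after = 230_000
benefit_attributed_to_training = 60_000  # assumed share of the gain credited to training
program_cost = 40_000

print(f"Sales change: {percent_change(monthly_sales_before, monthly_sales_after):.1f}%")
print(f"Training ROI: {training_roi(benefit_attributed_to_training, program_cost):.1f}%")
```

For KPIs where lower is better, such as workers’ compensation claims, a negative percentage change is the desired outcome.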

This level also includes looking at leading indicators. These are “short-term observations and measurements suggesting that critical behaviors are on track to create a positive impact on desired results.”

Tips for implementing level 4: results

- Before starting, know exactly what will be measured throughout the process, and share that information with all participants.

- If possible, use a control group.

- Don’t rush the final evaluation; give participants enough time to integrate the new skills effectively.

- Make sure observations are made properly and that observers understand the training type and desired outcome.

- You can ask participants for feedback, but this should be paired with observations for maximum efficacy.

- Especially in the case of senior employees, yearly evaluations and consistent focus on key business targets are crucial to the accurate evaluation of training program results.
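Where a control group is available, a difference-in-differences comparison is one way to separate the training’s effect from changes that would have happened anyway. It takes the KPI change in the trained group and subtracts the KPI change in the control group. The sketch below is a simplified illustration with hypothetical per-person figures. It ignores statistical significance and assumes that, without training, both groups would have followed the same trend.

```python
from statistics import mean

def difference_in_differences(trained_before, trained_after, control_before, control_after):
    """Estimated training effect: change in trained group minus change in control group."""
    trained_change = mean(trained_after) - mean(trained_before)
    control_change = mean(control_after) - mean(control_before)
    return trained_change - control_change

# Hypothetical monthly sales per person (in thousands), before and after training.
trained_before = [50, 55, 45]
trained_after = [60, 64, 56]
control_before = [52, 48, 50]
control_after = [54, 50, 52]

effect = difference_in_differences(trained_before, trained_after, control_before, control_after)
print(f"Estimated effect of training: {effect:.1f} thousand per person per month")
```

Here the trained group improved by 10 and the control group by 2, so roughly 8 of the 10 points can plausibly be credited to the training rather than to market conditions or seasonality.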

At every level of the Kirkpatrick Model, you can see results and measure areas of impact. This analysis lets organizations adjust the learning path when needed and better understand how the levels relate to one another. The result is a stronger, more effective training program and better business results.
